In October 2012 I started using bcache as an SSD caching solution for my Debian Linux server. I’ve been very happy about it so far. Back then I used a manually compiled 3.2 Linux kernel based on the bcache-3.2 git branch provided by Kent (which has been removed). This patch needed to be applied to make bcache work with grsecurity. I also created a Debian package of the bcache-tools userspace tools to be able to create the bcache setup.

At the start of this year I moved to a 3.12 kernel, also manually compiled. It’s quiet a relief that bcache is included in mainline since the 3.10 kernel. 🙂

This is my setup:

- 500GB backing device – 20GB caching device (qcow2 images)

- 1.3TB backing device – 36GB caching device (file storage)

The past year I’ve definitely noticed the performance difference using bcache. But I was still curious about when and how bcache was using the attached SSD. Is it using the write-back cache a lot? How many times can bcache read it’s data from the SSD cache instead of accessing the HDD?

I created a python script to collect all kinds of bcache statistics (parts of the code in this script are copied from bcache-status). This script outputs the statistics to STDOUT in a collectd exec plugin compatible way. The collectd exec plugin can be configured in collectd.conf this way:

<Plugin exec>

Exec "user:group" "/path/to/collectd-bcache"

</Plugin>

To visualize the collected data I created a bcache plugin for CGP. This is the result:

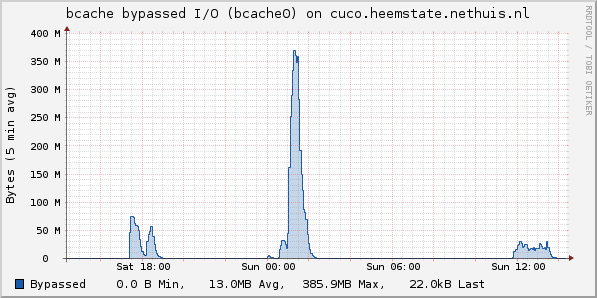

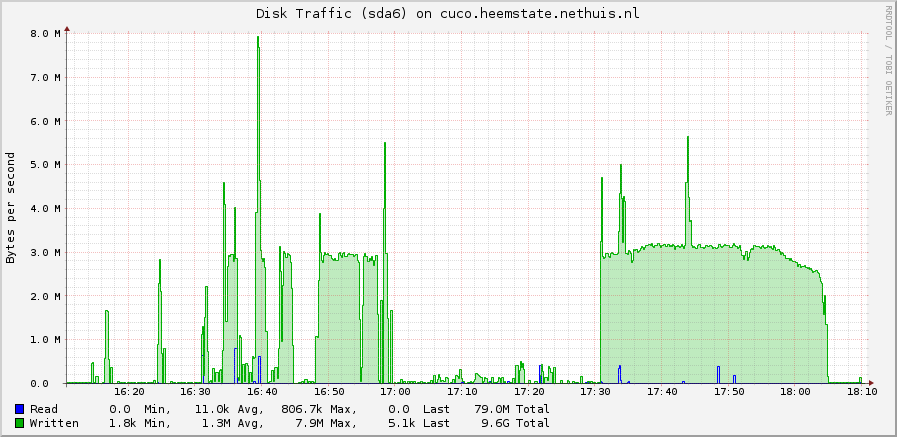

Write-back to HDD throttled

At some time I noticed that in my case flushing data from the write-back cache to the HDD was somehow rate-limited to ~3 MB/s. You can nicely see this in these graphs:

These threads on the mailinglist of bcache mention the same thing:

Kent explained that this is managed by the PD controller in bcache. The PD controller has been rewritten in the 3.13 Linux kernel, so I’m very interested if this behavior changed. I didn’t upgrade my kernel to 3.13 yet because I’m a very cautious about it. Still a lot of development is going on at the bcache project. But I’m looking forward to upgrading to 3.13, 3.14 or probably 3.15.

collectd-bcache: github.com/pommi/collectd-bcache

CGP bcache plugin: github.com/pommi/CGP/…/bcache.json